The current state of generative media often promises a “magic box” experience in which a simple text string produces cinematic results. Anyone working at a professional level, however, knows that text is the weakest form of control. In the move from static imagery to motion, the initial input (the “source asset”) becomes the single most important factor in whether the output succeeds.

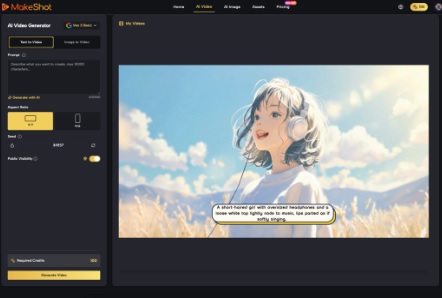

When working with an AI Video Generator, the prompt acts more like a suggestion, while the first frame acts as the blueprint. If that blueprint is structurally unsound, no amount of compute power or prompt engineering can save the sequence from temporal decay or visual “hallucinations.” Achieving high-fidelity video requires moving away from the “lottery” mindset of text-to-video and toward a disciplined image-to-video (I2V) workflow.

The Shift from Prompting to Asset Engineering

Text-to-video is inherently chaotic. Because the model must imagine the subject, the environment, and the physics of motion all at once, the probability of error is high. We see this in “morphing” artifacts, where a person’s face subtly shifts between frames or background architecture appears to melt.

Providing a high-quality source image first removes much of the guesswork from the model’s job. You have already defined the lighting, the color palette, the character’s geometry, and the spatial relationships within the frame. The AI Video Generator is then tasked mainly with the “delta”: the change between the current frame and the next. Because far less has to be invented, the resulting motion is usually much more stable.

However, this approach introduces a new bottleneck: the quality of that first frame. If the source image has even minor compression artifacts or anatomical inconsistencies, the video generation process will amplify them. In a static image, a slightly blurred background might look like stylistic bokeh; in a video, the AI may interpret that blur as smoke, water, or a shifting physical mass, leading to unwanted “jitter.”
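Jitter of this kind can be measured once a clip exists. One simple option, offered here as an illustrative assumption rather than a feature of any particular tool, is to average how much each pixel changes between consecutive frames inside a region that should stay still, such as the blurred background. The sketch assumes frames are already decoded into grayscale 2D lists. `static_region_jitter` and its `(top, left, bottom, right)` region format are hypothetical.

```python
from typing import List, Sequence, Tuple

# A grayscale frame as rows of pixel intensities (e.g. 0-255).
Frame = List[List[float]]


def static_region_jitter(frames: Sequence[Frame],
                         region: Tuple[int, int, int, int]) -> float:
    """Mean absolute frame-to-frame change inside a region that should not move.

    region is (top, left, bottom, right); bottom and right are exclusive.
    Returns 0.0 for a perfectly stable region; larger values mean more flicker.
    Hypothetical helper for illustration, not part of any video tool's API.
    """
    top, left, bottom, right = region
    total = 0.0
    count = 0
    # Compare each frame with the one before it, pixel by pixel.
    for prev, curr in zip(frames, frames[1:]):
        for y in range(top, bottom):
            for x in range(left, right):
                total += abs(curr[y][x] - prev[y][x])
                count += 1
    return total / count if count else 0.0


if __name__ == "__main__":
    # Three identical 4x4 frames: the top-left 2x2 patch never changes.
    still = [[[100.0] * 4 for _ in range(4)] for _ in range(3)]
    print(static_region_jitter(still, (0, 0, 2, 2)))  # 0.0
```

If a background patch scores noticeably higher than other static areas, that is a hint the source frame’s blur is being read as moving matter. What counts as “too high” depends on your footage and has to be calibrated by eye.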

Why the First Frame Is the ‘Genetic Code’ of Your Shot

In a standard I2V pipeline, the AI uses the first frame as its ground truth. It analyzes the pixels to understand the “depth” of the scene. It asks: What is foreground? What is background? What is likely to move?

Compositional choices like “leading lines” or “shallow depth of field” are not just aesthetic preferences; they act as cues for the model’s spatial reasoning. For instance, an image with a clear separation between a sharp subject and a blurred background gives the AI Video Generator a strong signal about where motion should be concentrated. Conversely, a “busy” wide shot with equal detail across the entire frame often confuses the model, leading to “everything-moving-at-once” syndrome, where the ground ripples or trees sway with unnatural, liquid-like physics.

One major limitation remains: the model’s “memory” of this first frame is not infinite. As a video progresses beyond the 3- or 4-second mark, the “genetic drift” of the pixels becomes more pronounced. Even with a perfect source asset, the model may eventually lose track of the original geometry, leading to a breakdown in consistency.

The Technical Debt of Low-Quality Source Assets

Low-resolution or highly compressed source assets are one of the main causes of “latent noise” in AI video. When an AI Video Generator processes an image, it doesn’t just see a picture; it sees a mathematical representation of noise and patterns.

If you use a low-quality screenshot or an upscaled web image as your source, the AI often perceives pixelation as texture. This results in “boiling” artifacts—a shimmering effect across surfaces that should be solid. To avoid this, creators must ensure that the source image is generated at or above the target video resolution.
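The resolution rule is easy to enforce before a frame ever reaches the video stage. The following is a minimal sketch, assuming sizes are given as (width, height) in pixels. `source_meets_target` and `upscale_factor_needed` are hypothetical names. The second function reports how much a too-small source would have to be enlarged. That number is a useful warning sign, because upscaled images are exactly where pixelation tends to be read as texture.

```python
from typing import Tuple

Size = Tuple[int, int]  # (width, height) in pixels


def source_meets_target(source: Size, target: Size) -> bool:
    """True if the source image is at least the target video size in both axes."""
    return source[0] >= target[0] and source[1] >= target[1]


def upscale_factor_needed(source: Size, target: Size) -> float:
    """Smallest uniform scale factor that makes the source cover the target.

    Returns 1.0 when no enlargement is needed.
    """
    factor = max(target[0] / source[0], target[1] / source[1])
    return max(1.0, factor)


if __name__ == "__main__":
    print(source_meets_target((1280, 720), (1920, 1080)))    # False
    print(upscale_factor_needed((1280, 720), (1920, 1080)))  # 1.5
```

A factor above 1.0 is a signal to regenerate the source at a higher resolution rather than upscale it.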

Using a dedicated AI Image Generator to craft the first frame—with specific attention to clean edges and high-contrast lighting—is essential. This “pre-processing” step allows the video model to focus its attention on fluid motion rather than trying to sharpen a blurry input.
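One way to screen candidate first frames for clean edges is a sharpness heuristic. The sketch below uses the variance of the Laplacian, a common blur-detection measure, written in plain Python over a grayscale 2D list. It is a swapped-in technique for illustration, not something the video model itself computes. Any pass/fail threshold would need to be tuned on your own images, and `laplacian_variance` is a hypothetical name.

```python
from typing import List

Image = List[List[float]]  # grayscale pixel intensities, rows of equal length


def laplacian_variance(img: Image) -> float:
    """Sharpness score: variance of the 4-neighbour Laplacian over interior pixels.

    Low values suggest a soft or blurry image; crisp edges raise the score.
    """
    h, w = len(img), len(img[0])
    if h < 3 or w < 3:
        raise ValueError("image must be at least 3x3")
    responses = []
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Discrete Laplacian: sum of the 4 neighbours minus 4x the centre.
            lap = (img[y - 1][x] + img[y + 1][x]
                   + img[y][x - 1] + img[y][x + 1] - 4 * img[y][x])
            responses.append(lap)
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


if __name__ == "__main__":
    flat = [[128.0] * 5 for _ in range(5)]
    checker = [[255.0 if (x + y) % 2 == 0 else 0.0 for x in range(5)]
               for y in range(5)]
    print(laplacian_variance(flat))                                # 0.0
    print(laplacian_variance(checker) > laplacian_variance(flat))  # True
```

On real images, OpenCV’s `cv2.Laplacian(gray, cv2.CV_64F).var()` computes a comparable score much faster.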

Compositional Logic: Helping the Model Understand Physics

Certain compositions are “friendlier” to AI Video Generator models than others. This is an area where traditional cinematography knowledge pays dividends.

1. Foreground/Background Separation: Use clear silhouettes. If a character’s arm overlaps too closely with a similarly colored background object, the AI will likely “fuse” them during motion. Creating a clear visual gap between the subject and the environment prevents these merging artifacts.

2. Lighting Consistency: AI models generally struggle with complex light reflections on moving surfaces (like water or glass). A source image with simple, directional lighting is easier for the model to maintain than one with multiple colorful light sources or strobe effects.

3. The “Horizon” Anchor: Including a stable, horizontal reference point helps the AI maintain a consistent camera plane. Without a clear horizon or ground plane, camera moves such as drone shots or pans often appear to tilt or drift off-axis.
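Point 1 can be roughed out numerically by comparing the average color of the subject with the average color of the background it overlaps. The sketch below borrows the WCAG 2.x contrast-ratio formula as a stand-in measure. This is an assumption: the formula captures brightness contrast only, not hue, and nothing suggests video models compute it. How you sample the two average colors, and what ratio is “enough,” are left open. The helper names and sample colors are hypothetical.

```python
from typing import Tuple

RGB = Tuple[int, int, int]  # 8-bit sRGB values, 0-255


def _linear(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    """Weighted sum of linearized channels, per the WCAG 2.x definition."""
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(subject: RGB, background: RGB) -> float:
    """WCAG contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(subject), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


if __name__ == "__main__":
    # Hypothetical average colors of a subject's sleeve and the wall behind it.
    sleeve, wall = (120, 90, 70), (125, 95, 72)
    print(f"{contrast_ratio(sleeve, wall):.2f}")  # close to 1.0: low separation
```

A ratio near 1.0 flags the kind of similarly colored overlap that point 1 warns may fuse during motion.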

It is worth noting that even with perfect composition, current models still struggle with “occlusion,” where one object passes behind another. If a person walks behind a tree in your video, the AI may fail to remember what the person looked like when they emerge on the other side. This remains a known weakness of current diffusion-based architectures.

Where the Process Still Fails: The Limits of I2V

Despite the control offered by I2V workflows, there are specific scenarios where the AI Video Generator will almost certainly fail, regardless of how good the first frame is.

Complex human locomotion—like running or climbing—requires a deep understanding of skeletal mechanics that many current models lack. If your source image features a person in a complex, multi-jointed pose, the AI might struggle to figure out which limb moves in which direction, leading to “extra legs” or impossible joint rotations.

Similarly, text or signage within a scene remains a challenge. If your source frame has a sign that says “CAFE,” the AI might hold that text for two seconds, but as the camera moves, the letters will likely warp into unrecognizable glyphs. Reset your expectations here: use AI for mood, atmosphere, and organic motion, but rely on traditional VFX or editing for precision elements like text or specific character actions.

Operationalizing the Workflow

To get the most out of an AI Video Generator, creators should treat the process as a multi-stage production rather than a single click.

First, generate a high-resolution base image. Don’t settle for the first result; look for a frame that has “motion potential”—a pose that implies a direction or an environment that has depth.

Second, evaluate the image for “artifacts” that might confuse the video model. Check for overlapping limbs, floating objects, or nonsensical shadows. Fixing these in an image editor before the image reaches the video stage can save hours of failed video generations.
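This stage works as a gate: a frame either moves on to the video model or goes back to the image editor. The following is a minimal sketch of that gate as a checklist, built from the three problems named above. `FrameReview` and its fields are hypothetical. The flags are meant to be set by a human reviewer, not detected automatically.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FrameReview:
    """Reviewer's notes on a candidate first frame (hypothetical structure)."""
    overlapping_limbs: bool = False
    floating_objects: bool = False
    nonsensical_shadows: bool = False
    notes: List[str] = field(default_factory=list)  # any other problems spotted

    def issues(self) -> List[str]:
        """Problems to fix in an image editor before the video stage."""
        found = []
        if self.overlapping_limbs:
            found.append("overlapping limbs")
        if self.floating_objects:
            found.append("floating objects")
        if self.nonsensical_shadows:
            found.append("nonsensical shadows")
        return found + self.notes

    def ready_for_video(self) -> bool:
        """True only when no issues were recorded."""
        return not self.issues()


if __name__ == "__main__":
    review = FrameReview(floating_objects=True, notes=["hand merges with railing"])
    print(review.issues())           # ['floating objects', 'hand merges with railing']
    print(review.ready_for_video())  # False
```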

Third, use the prompt in the video stage to describe only the *movement*, not the *subject*. If your image already shows a man in a forest, your video prompt shouldn’t say “A man in a forest.” Instead, it should say something like “Slow tracking shot, the man turns his head slightly to the left, leaves rustle in the background.” This focuses the model’s compute on motion rather than on re-interpreting the scene.
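The image carries the scene and the prompt carries the motion. That division of labor can be made explicit in whatever script assembles your prompts. The following is a minimal sketch, assuming prompts are plain strings. `build_motion_prompt` and `restated_terms` are hypothetical helpers. The substring check is deliberately naive; for example, it would also flag “forest” inside “forestry.”

```python
from typing import Iterable, List


def build_motion_prompt(camera: str, subject_motion: str,
                        ambient_motion: str = "") -> str:
    """Join motion-only clauses (camera, subject, environment) into one prompt."""
    parts = [p.strip() for p in (camera, subject_motion, ambient_motion) if p.strip()]
    return ", ".join(parts) + "." if parts else ""


def restated_terms(prompt: str, scene_terms: Iterable[str]) -> List[str]:
    """Return scene terms, already visible in the first frame, that the prompt repeats.

    Naive case-insensitive substring match; ideally this returns an empty list.
    """
    lowered = prompt.lower()
    return [term for term in scene_terms if term.lower() in lowered]


if __name__ == "__main__":
    prompt = build_motion_prompt(
        "Slow tracking shot",
        "the man turns his head slightly to the left",
        "leaves rustle in the background",
    )
    print(prompt)
    print(restated_terms(prompt, ["forest"]))               # []
    print(restated_terms("A man in a forest", ["forest"]))  # ['forest']
```

Deciding which words count as “scene” is a judgment call. The example prompt still names “the man” so the model knows what should move, so only setting words like “forest” belong in the list.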

Final Thoughts for Creators

The “editor’s eye” is becoming more valuable than the “prompter’s vocabulary.” As the industry moves toward more sophisticated tools, the ability to judge the structural integrity of a single frame will determine the professional quality of the resulting video.

The transition from AI-generated novelty to AI-generated utility happens when we stop asking the model to “make something cool” and start giving it the specific visual data it needs to succeed. By prioritizing the first frame, respecting the limits of temporal consistency, and providing high-resolution source material, you turn the AI Video Generator from a random content machine into a precise tool for production.

Success in this space isn’t about finding the “perfect prompt.” It’s about engineering the perfect starting point and understanding that in the world of generative video, the first frame is the only frame that truly matters.